On Fri, Jul 1, 2016 at 5:47 PM Hou Jonny <address@hidden> wrote:

Hey folks,

Is this somehow related to the Python code I used?

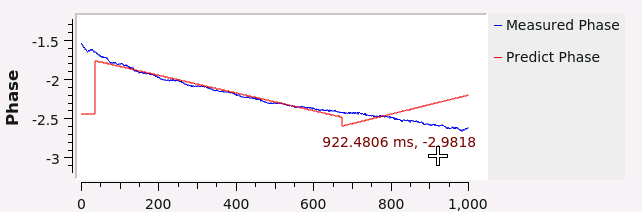

""" Embedded Python Blocks: Each this file is saved, GRC will instantiate the first class it finds to get ports and parameters of your block. The arguments to __init__ will be the parameters. All of them are required to have default values! """ import numpy as np from gnuradio import gr from sklearn.linear_model import LinearRegression class blk(gr.sync_block): def __init__(self, factor=1.0): # only default arguments here gr.sync_block.__init__( self, name='Embedded Python Block', in_sig=[np.complex64,np.complex64], out_sig=[np.float32, np.float32, np.float32] ) self.factor = factor def work(self, input_items, output_items): H = input_items[0] * input_items[1] output_items[0][:] = np.abs(H) phase = np.unwrap(np.angle(H)) output_items[1][:] = phase ##linear regression phase_x_train = np.array(range(1,len(phase)+1)).reshape(-1,1) phase_y_train = phase regr = LinearRegression() regr.fit(phase_x_train, phase_y_train) print regr.coef_, regr.intercept_, len(phase_x_train),phase_x_train[0],phase_x_train[-1] output_items[2][:] = regr.predict(phase_x_train) return len(output_items[0])Or any others information needed for further trouble shooting?

Cheers!
BR//Jonny

On Tue, Jun 28, 2016 at 5:46 PM Hou Jonny <address@hidden> wrote:

Hi guys and gals,

I have a question regarding buffer size when looping a file source:

Currently I intend to do offline processing of some captured data: read the data (for example, 1201 samples) from file sources, then process it with some filtering and so on. After that I intend to analyze the data with linear regression in a Python block. However, with looping enabled ("Repeat" = Yes), the chunks of data that GNU Radio delivers do not line up with the 1201-sample length, so the linear regression sometimes runs across two loops of the file (the tail of the first loop and the head of the second), which gives wrong regression results.

I tried to adjust the max/min buffer values, but it did not work as I expected. Warnings like "max output buffer set to 1536 instead of requested 1201" always pop up...

Any advice and insights?

BR//Jonny
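
To illustrate the mismatch described above, here is a minimal standalone sketch (plain NumPy in Python 3, no GNU Radio). It assumes a hypothetical phase ramp of 0.01 rad/sample that restarts on every pass through the 1201-sample file, and it assumes the block receives fixed chunks of 1536 samples; in a real flowgraph the chunk size handed to work() can vary from call to call. The names RECORD_LEN, CHUNK_LEN, chunk_spans, and straddles_wrap are made up for this illustration.

```python
import numpy as np

RECORD_LEN = 1201   # samples per pass through the file (from the post)
CHUNK_LEN = 1536    # buffer size reported in the warning (from the post)
N_CHUNKS = 4        # number of chunks to simulate (arbitrary)


def chunk_spans(n_chunks, chunk_len=CHUNK_LEN):
    """Return (start, stop) stream offsets of consecutive fixed-size chunks."""
    return [(i * chunk_len, (i + 1) * chunk_len) for i in range(n_chunks)]


def straddles_wrap(start, stop, record_len=RECORD_LEN):
    """True if the span [start, stop) holds samples from more than one pass."""
    return start // record_len != (stop - 1) // record_len


# Assumed test signal: a phase ramp that restarts at every file wrap.
true_slope = 0.01
stream = true_slope * (np.arange(N_CHUNKS * CHUNK_LEN) % RECORD_LEN)

# Reference: a fit over exactly one pass recovers the ramp slope.
clean_slope = np.polyfit(np.arange(RECORD_LEN), stream[:RECORD_LEN], 1)[0]

# Per-chunk fits, as a block would do if it fitted whatever work() receives.
straddle_flags = []
chunk_slopes = []
for start, stop in chunk_spans(N_CHUNKS):
    x = np.arange(stop - start)
    slope = np.polyfit(x, stream[start:stop], 1)[0]
    straddle_flags.append(straddles_wrap(start, stop))
    chunk_slopes.append(slope)
    print(start, stop, straddle_flags[-1], slope)

print("slope from one full pass:", clean_slope)
```

Under these assumptions every 1536-sample chunk contains at least one wrap, so none of the per-chunk fits recovers the ramp slope, while a fit over exactly one 1201-sample pass does.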