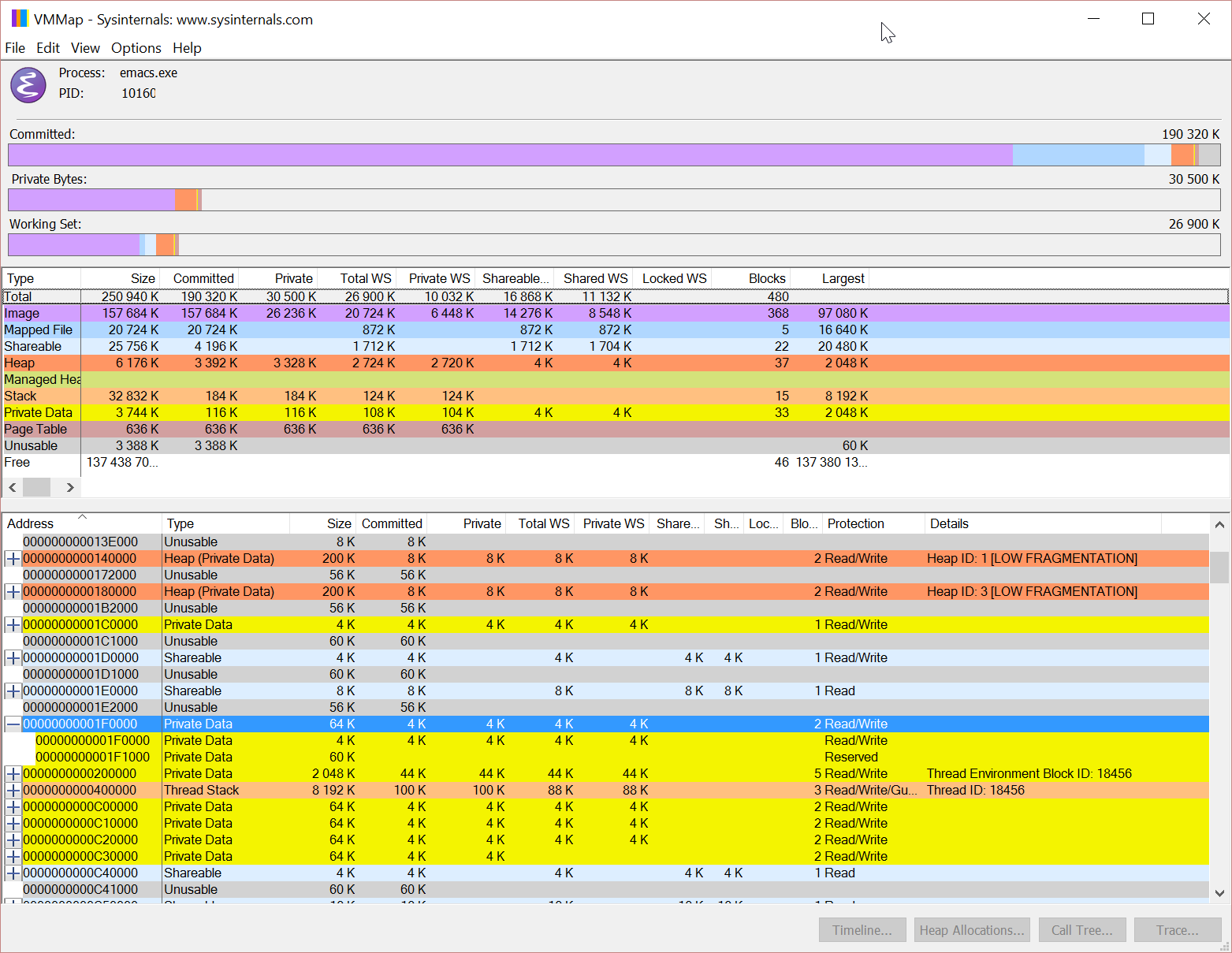

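The reserved region is only 4k, and the 60k that follow it are lost, because VirtualAlloc places each reservation on an allocation-granularity boundary (typically 64k).

I think it is worth trying this: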

diff --git a/src/w32heap.c b/src/w32heap.c
index 69706a3..db14357 100644
--- a/src/w32heap.c
+++ b/src/w32heap.c
@@ -641,12 +641,12 @@ mmap_alloc (void **var, size_t nbytes)
          advance, and the buffer is enlarged several times as the data is
          decompressed on the fly. */
       if (nbytes < MAX_BUFFER_SIZE)
-        p = VirtualAlloc (NULL, (nbytes * 2), MEM_RESERVE, PAGE_READWRITE);
+        p = VirtualAlloc (NULL, ROUND_UP((nbytes * 2), get_allocation_unit()), MEM_RESERVE, PAGE_READWRITE);
 
       /* If it fails, or if the request is above 512MB, try with the
          requested size. */
       if (p == NULL)
-        p = VirtualAlloc (NULL, nbytes, MEM_RESERVE, PAGE_READWRITE);
+        p = VirtualAlloc (NULL, ROUND_UP(nbytes, get_allocation_unit()), MEM_RESERVE, PAGE_READWRITE);
 
       if (p != NULL)
         {

With the patch applied, vmmap now shows:

You can see that the whole 64k block is reserved: the first 4k are committed and the next 60k remain usable.
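To make the arithmetic behind these numbers concrete, here is a minimal sketch in portable C. It is not the code from w32heap.c: `round_up` and `stranded_bytes` are hypothetical helper names, and the 64k allocation unit is an assumed typical value that real code would query at run time (for example through `get_allocation_unit ()` as in the patch).

```c
#include <assert.h>
#include <stddef.h>

/* Assumed value for illustration only: the usual Windows allocation
   granularity.  Real code should query it at run time.  */
#define ALLOCATION_UNIT_ASSUMED ((size_t) 65536)

/* Round SIZE up to the next multiple of UNIT, which must be a power
   of two.  Hypothetical stand-in for the ROUND_UP macro in the patch.  */
size_t
round_up (size_t size, size_t unit)
{
  assert (unit != 0 && (unit & (unit - 1)) == 0);
  return (size + unit - 1) & ~(unit - 1);
}

/* Address space that becomes unusable after reserving SIZE bytes,
   given that the next reservation must start on a UNIT boundary.  */
size_t
stranded_bytes (size_t size, size_t unit)
{
  return round_up (size, unit) - size;
}
```

Under these assumptions, `stranded_bytes (4096, ALLOCATION_UNIT_ASSUMED)` is 61440, which is the 60k reported as lost, while a reservation rounded up with `round_up (4096, ALLOCATION_UNIT_ASSUMED)` covers the full 65536 bytes and strands nothing.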

Anyway, it is more correct with this patch than without it.

Fabrice